The Prisma App

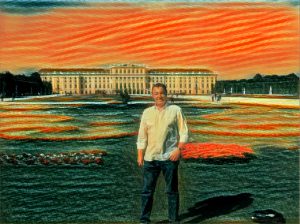

Hands down, Prisma was the App of the Year for 2016. For those of you who have not heard of it, Prisma turns your photos into works of art. The app is available for Android and iOS, and it could not be simpler to use. Take a picture with your phone or choose a photo from your album. Then choose from a range of art styles designed to emulate famous artists like Claude Monet and Pablo Picasso. In a matter of seconds your photo looks like it was painted rather than shot with a camera. The app was created by Alexey Moiseenkov, who also founded Prisma Labs, based in Moscow. Take a look at the before and after photos of a picture of my spouse at Schönbrunn Palace in Vienna, Austria.

Picture before Prisma App

Photo after Prisma App

But the fun doesn’t stop there. Late last year Prisma Labs launched an update that turns videos into moving art. Users can import a video file from their phone’s camera roll or shoot one within the app. The technology applies a chosen style to each frame of a video up to 15 seconds long. To process the video, the app analyzes each image’s pixels and rearranges them to achieve the desired effect. The computer attempts to act like an artist, interpreting real-life scenes. Depending on the iPhone model, editing a clip can take anywhere from about 35 seconds (iPhone 7) to two minutes (iPhone 6). However, while the app makes still photos look amazing, I found the video feature less successful. My videos looked like they were picking up noise interference on an old tube-style television.

Deep Neural Network

Now, if you want to see an impressive display of video art, check out the work of Danil Krivoruchko. His work on NYC Flow is mesmerizing.

To achieve this masterpiece, Danil Krivoruchko used an open-source deep neural network. I am going to do my best to explain how deep neural networks learn. If you still don’t understand, then ask your kids or your IT guy at work. Hopefully your IT guy isn’t Nick Burns from SNL.

Neural networks are a set of algorithms, modeled loosely after the human brain. These networks are designed to recognize patterns. They interpret sensory data through a kind of machine perception, labeling or clustering raw input. The patterns they recognize are numerical, so all real-world data, whether images, sound, text or time series, must first be translated into numbers. The “deep” in deep learning refers to the fact that the neural networks are “stacked,” or in other words layered. The layers consist of nodes. A node is just a place where computation happens, loosely patterned on a neuron in the human brain, which fires when it encounters sufficient stimuli. All right, enough of that technical stuff. If you want to see more of Danil’s work, check out his Instagram page.
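For the curious (or for your IT guy), here is a tiny Python sketch of the node and layer idea described above. It is only an illustration, not the network Danil or Prisma actually used: the function names, the sigmoid "firing" rule, and the hand-picked weights are all my own assumptions, and a real network would learn its weights from data.

```python
import math

def node(inputs, weights, bias):
    """One node: add up the weighted inputs, then squash the total to a 0..1 'firing' level."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # sigmoid: one common choice, assumed here

def layer(inputs, weight_rows, biases):
    """A layer is just several nodes that all look at the same inputs."""
    return [node(inputs, w, b) for w, b in zip(weight_rows, biases)]

def network(inputs, layers):
    """'Deep' means stacked: each layer's outputs become the next layer's inputs."""
    signal = inputs
    for weight_rows, biases in layers:
        signal = layer(signal, weight_rows, biases)
    return signal

# Hypothetical, hand-picked weights purely for illustration.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # layer 1: 3 inputs -> 2 nodes
    ([[1.0, -1.0]], [0.0]),                               # layer 2: 2 inputs -> 1 node
]

pixel = [0.2, 0.6, 0.9]  # e.g. one pixel's red, green, blue values scaled to 0..1
output = network(pixel, layers)
print(output)
```

Notice that the pixel goes in as plain numbers and a number comes out. That is the "everything is numerical" point in action.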